While normalization breaks up the raw data for the sake of protocol, without considering functional needs, denormalization is done only to serve the application. It must be done with care, of course: excessive denormalization can cause more problems than it solves. If we look at the most frequent operation, the read, we may say that any application is a search application. This is why the modeling process needs to consider the entities that are logically handled (the ones the search is run against), such as articles, user information, car specifications, etc. In this context the raw data must be analyzed from a different point of view, namely that of the application's needs, which makes the database application-oriented.

However, the support that big data platforms offer for unstructured data must not be confused with a lack of need for data modeling. When it comes to data modeling in the big data context (especially MarkLogic), there is no universally recognized form into which you must fit the data; on the contrary, the schema concept no longer applies.

Moreover, even the lexicon and the etymology sustain this practice: the fragmented data are considered "normal". In the same way, after normalization, the data needs to be brought back together for the sake of the application: the data, which was initially all together, has been fragmented and now needs to be recoupled. It seems rather redundant, but it has been the most widely used approach of the last decades, especially when working with relational databases.

The article "Denormalizing Your Way to Speed and Profit" makes a very interesting comparison between data modeling and philosophy: Descartes's principle of mind and body separation, widely accepted (initially), looks an awful lot like the normalization process, the separation of data. Descartes's error was to separate (philosophically) two parts which were always together.
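The round trip described above (data fragmented by normalization, then recoupled for the application) can be sketched in a few lines of Python. This is only an illustration under assumed names, not MarkLogic code: the `authors` and `articles` structures and the `denormalize_article` and `search_by_author` helpers are hypothetical.

```python
# Hypothetical normalized data: each fact is stored once, in its own "table".
authors = {
    1: {"name": "Ada", "country": "UK"},
}
articles = [
    {"id": 10, "title": "Denormalization basics", "author_id": 1},
    {"id": 11, "title": "Modeling for search", "author_id": 1},
]


def denormalize_article(article, authors_by_id):
    """Recouple an article with its author into one self-contained document."""
    author = authors_by_id[article["author_id"]]
    return {
        "id": article["id"],
        "title": article["title"],
        # The author data is copied into the document, so a read needs no join.
        "author": dict(author),
    }


# Application-oriented view: one document per entity the application searches for.
documents = [denormalize_article(a, authors) for a in articles]


def search_by_author(docs, name):
    """Return the titles of documents whose embedded author matches the name."""
    return [d["title"] for d in docs if d["author"]["name"] == name]
```

The read path (`search_by_author`) touches only the denormalized documents, which is the point of modeling around the entities the application actually searches for. The cost is that the author data now exists in several copies.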

Most of the time, normalization is a good practice for at least two reasons: it frees your data of integrity issues on alteration tasks (inserts, updates, deletes), and it avoids bias towards any query model.

About normalization

Data normalization is part of the data modeling process for creating an application. In this article I am going to approach the dualism of normalizing and denormalizing in the big data context, drawing on my experience with MarkLogic (a platform for big data applications). Nowadays "big data" is a trend, but it also describes a situation in which more and more applications need to handle the volume, the velocity, the variety, and the complexity of the data (according to Gartner's definition). However, ever since college things have changed: we do not hear so much about relational databases, although they are still predominantly used in applications.
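The first reason given for normalization, integrity on alteration tasks, can be made concrete with a small Python sketch. The data and the two `rename_author_*` helpers are hypothetical and exist only to compare how many records an update has to touch in each model.

```python
# Hypothetical normalized model: the author's name is stored exactly once.
normalized_authors = {1: {"name": "Ada"}}
normalized_articles = [
    {"title": "A", "author_id": 1},
    {"title": "B", "author_id": 1},
]

# Hypothetical denormalized model: the name is copied into every article.
denormalized_articles = [
    {"title": "A", "author_name": "Ada"},
    {"title": "B", "author_name": "Ada"},
]


def rename_author_normalized(authors, author_id, new_name):
    """Update the single authoritative record; return the number of records touched."""
    authors[author_id]["name"] = new_name
    return 1


def rename_author_denormalized(docs, old_name, new_name):
    """Update every copy of the name; missing one would leave the data inconsistent."""
    touched = 0
    for doc in docs:
        if doc["author_name"] == old_name:
            doc["author_name"] = new_name
            touched += 1
    return touched


touched_normalized = rename_author_normalized(normalized_authors, 1, "Ada L.")
touched_denormalized = rename_author_denormalized(denormalized_articles, "Ada", "Ada L.")
```

In the normalized model the update is a single write, while the denormalized model needs one write per copy, which is where integrity issues can creep in if a copy is skipped.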

That is a good practice; it also means that the semesters spent studying databases paid off and shaped your way of thinking about and working with data.

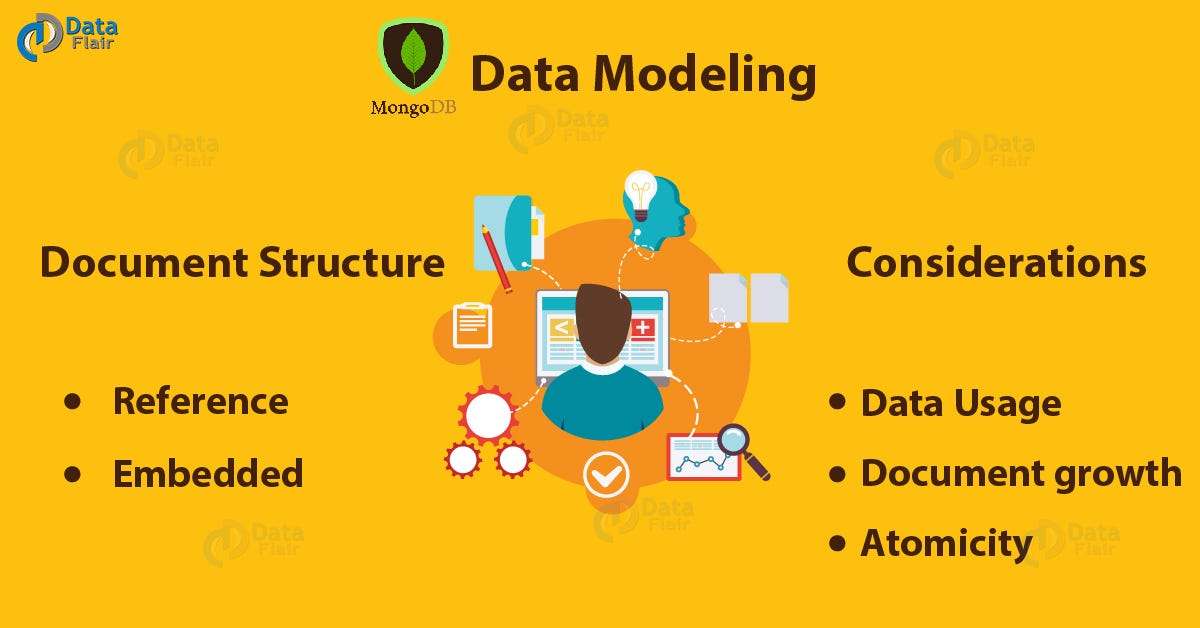

When someone says "data modeling", everyone automatically thinks of relational databases, of the process of normalizing the data, of the third normal form, and so on.